Investing in Startups at AI's Point of Recursion (Part 2)

- A recent blog by John Spindler, General Partner of Twin Path Ventures -

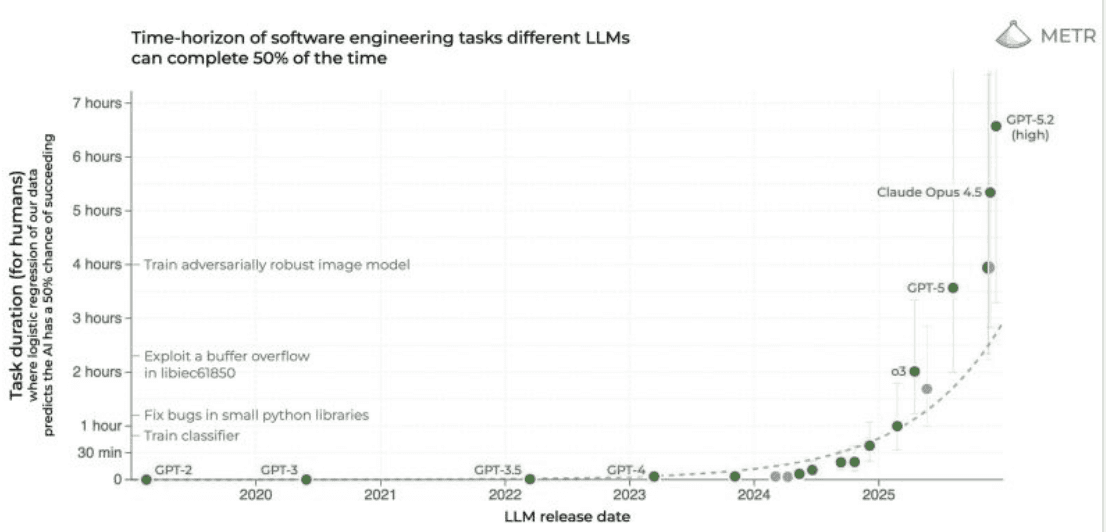

In the previous blog I stated that Twin Path Ventures is now signed up to the view that the “Point of Recursion” in AI, or “Recursive Self-Improvement” (RSI), is close. To revisit the Eric Schmidt quote from the previous blog: “The ability for computers to write programs, to generate mathematical conjectures, to discover new facts, looks like it's very very close”. This month the launch of Claude Opus 4.6 spooked the markets into thinking that, if RSI has not yet quite arrived, then with the latest LLM-powered code-generating agents we are getting very close. This realisation saw a big fall in software stocks, as the rise of this generation of AI is now seen as having a big negative impact not only on the growth prospects of existing software platforms but even on their ongoing existence, never mind the financial valuations and returns of large parts of the whole software sector and the VCs that have financed it.

There is now a big debate raging online and in blogs about what this means. My favourite take is from my good friend Nare Vardanyan, the founder of Ntropy (one of my previous investments), whose blog “AI is eating enterprise software- but not the way you think” is a must-read. You do not have to buy into the cries of “doom, we are all doomed” from Jason Lemkin on the latest 20VC podcast to think that everything is now different (probably not in a good way) for both founders and their investors. In particular, it is hard not to be overwhelmed by the speed of change, or intimidated by the prodigious output and world-conquering ambitions of the major “Frontier Labs”, especially the big three of OpenAI, Google DeepMind and this month's behemoth, Anthropic.

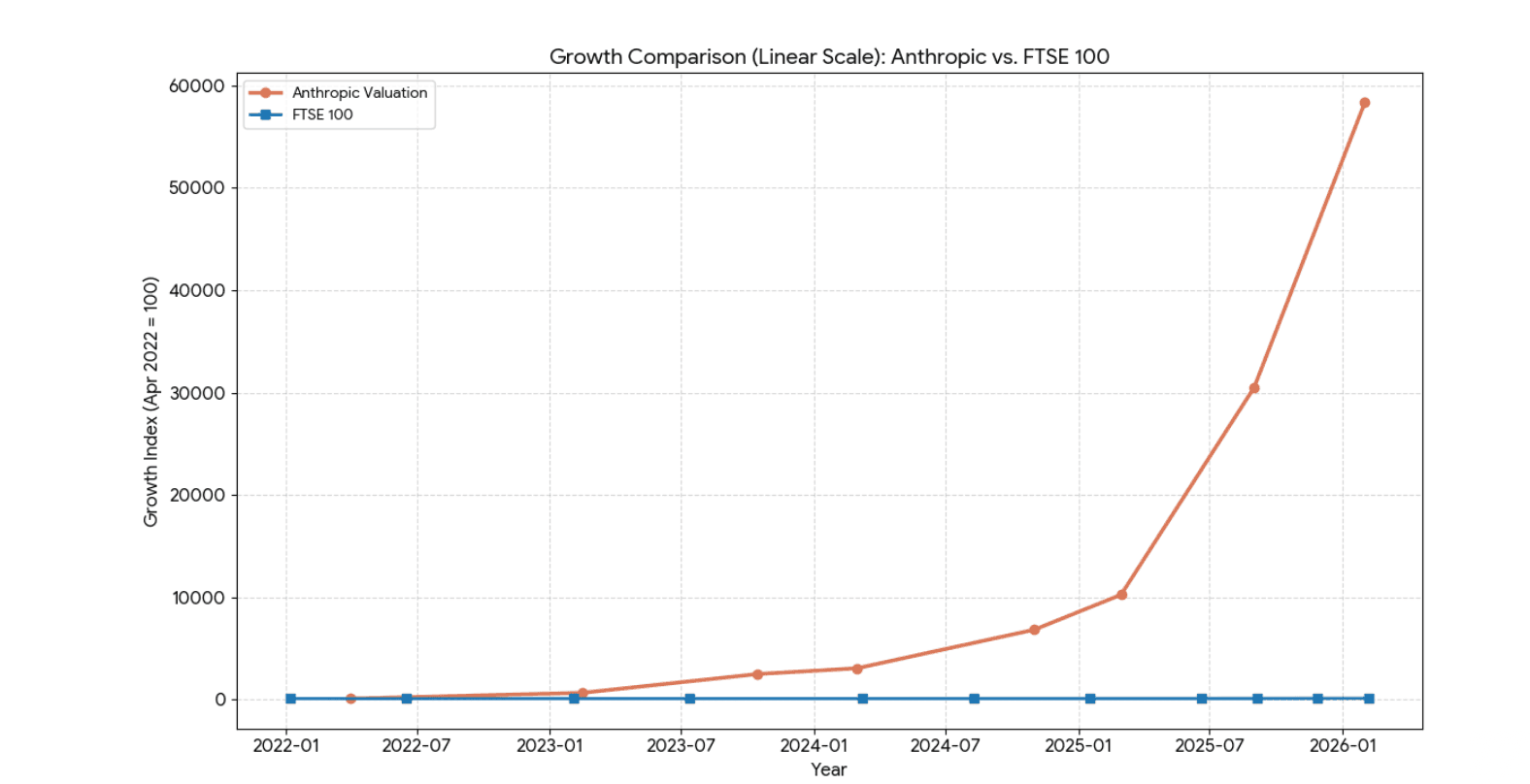

[If, in an alternative universe, Anthropic was a UK company, it would be the most valuable company in the UK by a considerable way. Its valuation of £270 billion would be almost 30% bigger than either HSBC or AZ, the present biggest UK companies, and this is for a five-year-old company that in 2023, with virtually no revenue, was ridiculed for raising VC money at a valuation of around $4 billion. It makes you think what DeepMind would be worth if it was a standalone UK company, but it also shows why investing in the next generation of Frontier Labs, those with the talent and focus to take Anthropic's crown, at so-called ludicrous valuations may in fact be very good investments, even as a portfolio hedge.]

Even for me, having invested at seed stage in AI-first startups since 2014 (and for Twin Path Ventures, which has invested in over 30 AI-first startups in less than 3 years), these are super interesting and exciting times. We as investors need to stay on our toes so that we are not left behind, and do not find ourselves backing startups that cannot adapt and become victims of the pace of change. This big risk is somewhat mitigated because virtually all the startups we invest in are founded by world-class computer scientists. They are (through our investment and encouragement) using their skills and know-how to build startups at the frontier of AI, incorporating the latest models and techniques from the frontier labs whilst building and launching some of their own proprietary innovations. To exemplify this, last week we heard from a portfolio startup who in the next 2-3 months will launch the world's first RSI agent, whilst another frontier portfolio startup has been whitelisted for exclusive access to the latest models by one of the major LLM providers. Our portfolio startups are not passive but actively engaged in the race to Recursion, whilst building solutions that solve their customers' problems.

But as the pace of R&D in AI keeps on accelerating, we as a fund are adapting so we can invest in startups that can take full advantage of what is coming.

So what do we know about progress towards the point of recursion? We know that the transformer model, powered by the best GPUs doing enormous amounts of parallel matrix multiplication on billions of data points held in mind-bogglingly big context windows, has on a multi-modal basis (language, vision, sound) matched human performance on numerous cognitive tasks. We know this scales (unbelievably well). We know that over the last 18 months the major improvements in LLM performance have come from post-training and test-time compute optimisations. We know that since the launch of OpenAI o1, reasoning models have used reinforcement learning to train LLMs to think systematically, auto-correct and be optimised for test-time compute, all of which has generated the big improvements that are now powering the rise of agentic AI.

We know there are still issues with reliability, robustness and trust, that the costs are (or can be) exorbitant, and that the economics often seem to consume more cash than they produce. But whilst some (as we discussed in the previous blog) believe these are fundamental conceptual problems with LLMs, others (including me) believe these issues are engineering challenges that each day, step by step, keep getting improved upon by a vast army of AI scientists and data engineers.

We know that memory is still a big issue (although Google's Titans/MIRAS approach to giving LLMs some form of memory that mimics human memory seems promising). We also know that some of the tooling, such as RAG, does not work at scale (hence the reason Twin Path invested in Page Index - do check them out). But this apart, the biggest issue is that LLMs can, for the most part, only draw inferences from data they were trained on or that is held within their albeit super large context windows, limiting their ability to discover anything fundamentally new - "the unknown-unknown".

LLMs are not able to make causal inferences, as humans can, on data they have not previously seen and, unlike humans, are not so far able to generate and make informed decisions and continuously learn from small amounts of example data at test-time. The realm they can operate in is also limited to where baseline training data is easily accessible (and even in the digital world they may be running out). Also, where the operating environment is complex, causally interactive and stochastic, and where proof is empirical and scientific, the simulation-to-reality gap is a real and persistent challenge. The consensus is that we will need to develop new AI architectures to solve these challenges, whether these be world models, physics-based models or “liquid brain” paradigms. These new architectures will be needed to supplement or replace the LLM architecture for certain cognitive tasks in certain environments if AI is ever to work effectively alongside humans, never mind replace them. So we, like many, are now talking about achieving “super intelligence” on certain tasks and in certain realms, as opposed to AGI. Based on this, we think there are lots of opportunities for startups to out-compete big tech. Our informed working hypothesis is that there will be many realms and uses, each with a different point of recursion for AI, rather than a winner that takes everything.

So based on this conviction, our insights and first hand observations as investors in this space, we believe there are 5 types of startups to back in the race to the point of recursion.

1. New Frontier AI research Labs focused on achieving RSI - Well-resourced teams of world-class experts can still out-compete big tech in reaching the point of recursion. Watching The Thinking Game over the holidays reminded me that innovation breakthroughs tend to be the realm of small, highly motivated, well-led and extremely well-resourced teams. The AlphaFold team in the documentary was a small team within DeepMind, which is in itself a small team within Google. Small, extremely well-resourced, super smart and focused teams can win the race to Recursion. They know it, big tech knows it, and although the valuations are mind-blowingly high, the impact will be enormous if they achieve RSI. Next month we hope to announce that we have made an investment in one such major new Frontier Lab, and we are getting sight of many more. To this end, London being one of the world's research epicentres for AI is a massive advantage for a fund such as ours, which has such deep networks in the AI sector in the UK.

2. Unique data platforms - Not all data is already in LLMs' enormous context windows. Some data is generated in real time and is highly dynamic, coming from the real, noisy world. Although it is true that the latest Teacher-Student Distillation methods are generating lots of high-quality synthetic data for LLMs to be trained on, when it comes to many specific, dynamic use cases, synthetic data still needs core real-world baseline data that is empirically true and factual, and that can be used as a reference and anchor. So startups able to access and generate this unique data will become super useful: data that can be filtered, combined with other multi-modal datasets and amalgamated to generate new and interesting data points, which in turn can be used as an environment to train or fine-tune models able to hit recursion points in specific verticals and use cases. This data (outside LLMs' context windows) has probably never been more valuable. Platforms that have this data and, just as important, the infrastructure to make it easy to extract, transform and load it will be able to exploit, and not be overwhelmed by, AI models that hit the point of recursion. We recently invested in data platforms for robotics, physical infrastructure and health monitoring that fall into this category. Our past investments in the likes of Treefera and Deep Mirror show that there are new data platforms where customers initially come (often with their own data, which the platform supplements with more data) to generate new data points and new predictions and to discover new insights, but then stay for the infrastructure and data amalgamation. Finally, we are investing in data platforms that (partly due to their patent-protected advances in sensor and vision technologies) are able to extract completely new data sets that the latest AI can structure, feature-extract, categorise and, if you know what you are looking for, use to predict outcomes. Examples include Sentinel 4D, which can observe and track the morphology of a cell (how it behaves over time in dynamic 4D) to predict disease progression and therapeutic efficacy, and Sention, which uses patent-protected proprietary sensors to look inside battery cells as they are formed and uses AI to predict future cell performance. This is AI transforming the physical world through new data and new prediction capabilities, and it is even more likely to be impactful in a world of RSI.

3. Human in the loop AI applications - If you believe that some of the obstacles to reaching the point of recursion are engineering challenges around making LLMs (and the applications built upon them) work with humans in limited, non-ideal feedback environments, then these are challenges where startups can flourish. This means going deep into company and client workflows and task environments to understand them, then taking this understanding to assemble the data and create specifically engineered solutions (probably agentic) for particular verticals or use cases where AI models can self-improve, and where trust, when confined to specific tasks in specific environments, is more likely to be gained from humans. Being experts in the latest AI models and techniques, whilst having a deep understanding of the organisational and human system in order to design and deliver the best solutions, will be the future of enterprise-deployed AI. Some, like Nihar Bobba, go further, believing that vertical AI startups need to take on the liability and become more full-service providers. This is probably the direction of travel.

A more pressing concern is the likely persistence of “O-ring” problems: whatever AI cannot consistently automate without fault becomes not just the weakest link in the chain of tasks but the most expensive to fix, the link that requires human judgement. This is where startups need to use their expertise as leverage to build and deploy bespoke solutions that understand where the “O-ring” step is and how to manage through it. Heavily regulated industries, where all decisions need to be explainable and actions may need third-party approval, are obvious examples of verticals ideal for AI-first startups looking to exploit, and not be overwhelmed by, RSI-capable agents.
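To make the “O-ring” point concrete, here is a minimal sketch, not taken from any portfolio company's product. It assumes a workflow is a chain of independent steps that must all succeed, so the chain's reliability is the product of the step reliabilities. The step values and the helper names `chain_success` and `weakest_step` are hypothetical, chosen only for illustration.

```python
from math import prod


def chain_success(step_reliabilities):
    """Probability that every step succeeds, assuming steps fail independently."""
    return prod(step_reliabilities)


def weakest_step(step_reliabilities):
    """Index of the least reliable step, i.e. the candidate "O-ring" step."""
    return min(range(len(step_reliabilities)), key=lambda i: step_reliabilities[i])


# Hypothetical five-step workflow: four steps automated at 99%, one at 80%.
steps = [0.99, 0.99, 0.80, 0.99, 0.99]
baseline = chain_success(steps)

# Assumption: a human-in-the-loop check lifts the weak step to 99%.
fixed = steps.copy()
fixed[weakest_step(steps)] = 0.99
improved = chain_success(fixed)

print(f"weakest step index: {weakest_step(steps)}")
print(f"end-to-end success before: {baseline:.3f}, after: {improved:.3f}")
```

Under these assumed numbers, one unreliable step pulls the whole chain down to roughly 77% end-to-end success, while fixing that single step lifts it to about 95%. That is why knowing where the “O-ring” step sits matters more than marginal gains on the already reliable steps.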

To replace some humans whilst giving super powers to other humans within an enterprise will require startups to demonstrate high levels of performance and trust. It will help if the founders are already trusted industry insiders who understand the client's issues and challenges deeply. Being the “first to be trusted” can probably only come from working with clients over a period of time, from initial POCs, to proof-of-value pilots, to full platform contracts, but once that trust and track record of delivery is achieved, these enterprise AI startups can start scaling super quickly. This is what we are seeing with portfolio startups such as 5th Dimension and Azoma, and we are seeing signals that others in our portfolio are following similar paths.

In our view the time to back thin-skinned AI platforms that scaled super quickly through beautiful UI/UX and lots of venture cash to fund aggressive go-to-market campaigns is probably over. The frontier models' own offerings just got too good not to use, whilst the market is now full of copycat wrapper solutions, making it very hard for a new skin on an LLM to cut through the noise. We believe that for enterprise AI, investor patience is required to fund the somewhat laborious period it takes for startups to prove to clients that their AI solution can be trusted to deliver substantial value. In investing in enterprise AI startups timing is everything, and Twin Path Ventures will invest in vertical AI startups so they can use the runway to get into a position to achieve 10X sales growth.

4. De Novo Discovery Engines - The Sim-to-Reality gap is real and persistent. If AI is to solve the big scientific and engineering challenges in the physical world, in robotics, in biology, in chemistry and so on, then it will need teams building platforms that can create sim-to-reality continuous learning loops that include in-world physical verification and scalable replication. For instance, AI for drug discovery will need wet labs to empirically validate results and provide new data for the AI engines to work on: a lab-in-the-loop that quickly and expertly validates the simulation data, thereby enabling the de novo discovery capability to speed up and compound. In many of these cases the startups will be creating both AI-powered De Novo discovery platforms and IP-protectable, licensable assets. The paradox is that we believe patentable IP will become even more difficult to secure and defend in this AI-led discovery world whilst, at least in the physical world, becoming even more valuable. The Twin Path team having a partner who was a patent attorney in a top-tier law firm is a big advantage in navigating this challenge. This has enabled us to invest in the likes of HotHouse, Amply, Pentabind and Mater-AI, which are LLM-based De Novo discovery engines, and we would like more.

5. Frontier AI exploring new AI Architectures - Investing in the next big thing, ready for the next paradigm shift. This is the Frontier of AI, where LLMs and their infrastructure cannot go without new additional "outer compute" innovations and where points of recursion are at present least likely to happen. These are high-risk but high-return investments. However, since these AI scientists will be exploring unknown-unknowns partly using the latest LLMs, which may get to RSI soon, the likelihood of finding and exploring new AI paradigms increases and investing in them becomes less of a lottery. Research into World Models, Continuous Learning Adaptive Models, neuromorphic-LLM hybrids, Liquid brains and physics-based recursion models has had a boost from the success of transformer-based models. The breakthroughs in LLMs are leading by example in what can be done in associated research areas and the impact they can create. This is not our core thesis, but it is one that we at Twin Path are willing to explore.

There are obviously more types of AI startups that we expect to make a big impact, especially in 2026. For instance, I am more than hopeful that in 2026 startups and enterprises will pay more than lip service to the importance of evaluation, data input curation, inference optimisation, AI safety, AI alignment and intuitive tooling that actually makes the promise of AI work in practice, to its full potential and without risk of harm.

Interested in finding out more? We are hosting an event on the evening of March 11th to launch Fund IV. This fund will invest through SEIS and EIS into AI-first startups for the 26/27 financial year.

Register here: https://luma.com/9ft1e2ti

Note: This event is strictly for High Net Worth or Self-Certified Sophisticated investors who meet the criteria for elective professional client status. Post registration, we will need to verify your status prior to attendance or on the night of the event.